AI Agents - Lesson 9: Agent Performance and Evals

How to measure and create evals.

We are almost at the end of this course, and these last few lessons are for those of you that want to go beyond personal agents. Let's say you built a customer support agent at your company. You test it with a few questions.

“What’s your return policy?” - Good answer.

“How do I track my order?” - Looks great.

“Can I get a refund?” - Perfect.

You launch it feeling confident. Two weeks later, a customer complains that the agent told them they could return items after 90 days when your policy is 30 days. Another says it gave them someone else’s order tracking number. A third got frustrated because it kept recommending products instead of answering their simple question.

How did this happen? You tested it! You looked at a handful of responses, thought “this seems fine,” and shipped it. That’s hoping for the best, not an evaluation.

Evals are how you systematically measure whether your AI agent actually works. They’re the difference between “it seems fine” and “I know it’s fine because I tested 100 scenarios and it passed 95 of them.” Without evals, you’re driving blind. With them, you have a dashboard showing exactly what’s working and what needs fixing.

This lesson gives you a complete framework for evaluating AI agents, borrowed from how the best AI product teams operate. No coding required, just clear thinking about what matters and how to measure it.

The Driving Test Analogy

Evaluating AI is very different than testing traditional software. You are not checking a single correct output. Aman Khan has been writing and teaching people about Evals and I first read an article from him on Lenny's newsletter and a lot of things that I have been doing related to Evals use his concepts. He introduced this analogy that Evals are closer to performing a driving test. You observe how the system behaves across many situations and judge whether it handles real-world complexity in a safe and reliable way.

Good drivers need three things:

1. Awareness – Can they correctly interpret signals and react appropriately to changing conditions?

2. Decision-making – Do they reliably make the correct choices, even in unpredictable situations?

3. Safety – Can they consistently follow directions and arrive at the destination without going off the rails?

Your AI agent needs the same three things:

1. Awareness – Does it understand what users are actually asking?

2. Decision-making – Does it choose the right actions and provide accurate responses?

3. Safety – Does it avoid harmful outputs, protect data, and stay within boundaries?

“Just as you wouldn’t let someone drive without passing their test, you shouldn’t let an AI agent handle real users without passing evals” says Aman. Yet most teams ship agents after a few manual tests and hope for the best. Let’s change that.

What Are Evals?

Evals (evaluations) are systematic tests that measure how well your AI agent performs against specific criteria.

They’re similar to unit tests in software development, but with important differences:

Another good a analogy from Aman, “Traditional software testing is like checking if a train stays on its tracks”:

Deterministic (same input = same output)

Clear pass/fail

Binary outcomes

Predictable behavior

AI evals are like evaluating a driver in city traffic:

Non-deterministic (same question might get slightly different answers)

Quality on a spectrum (not just pass/fail)

Subjective criteria (helpfulness, tone, relevance)

Probabilistic behavior

Example:

Traditional test:

Function: calculate_tax(100)

Expected: 10

Actual: 10

Result: PASS ✓AI eval:

Input: "What's your return policy?"

Expected: Accurate policy, professional tone, under 100 words

Actual: "You can return items within 30 days of purchase with receipt..."

Criteria:

- Factually correct? ✓

- Professional tone? ✓

- Appropriate length? ✓

Result: PASS ✓The difference: AI evals often measure multiple dimensions of quality, not just correctness.

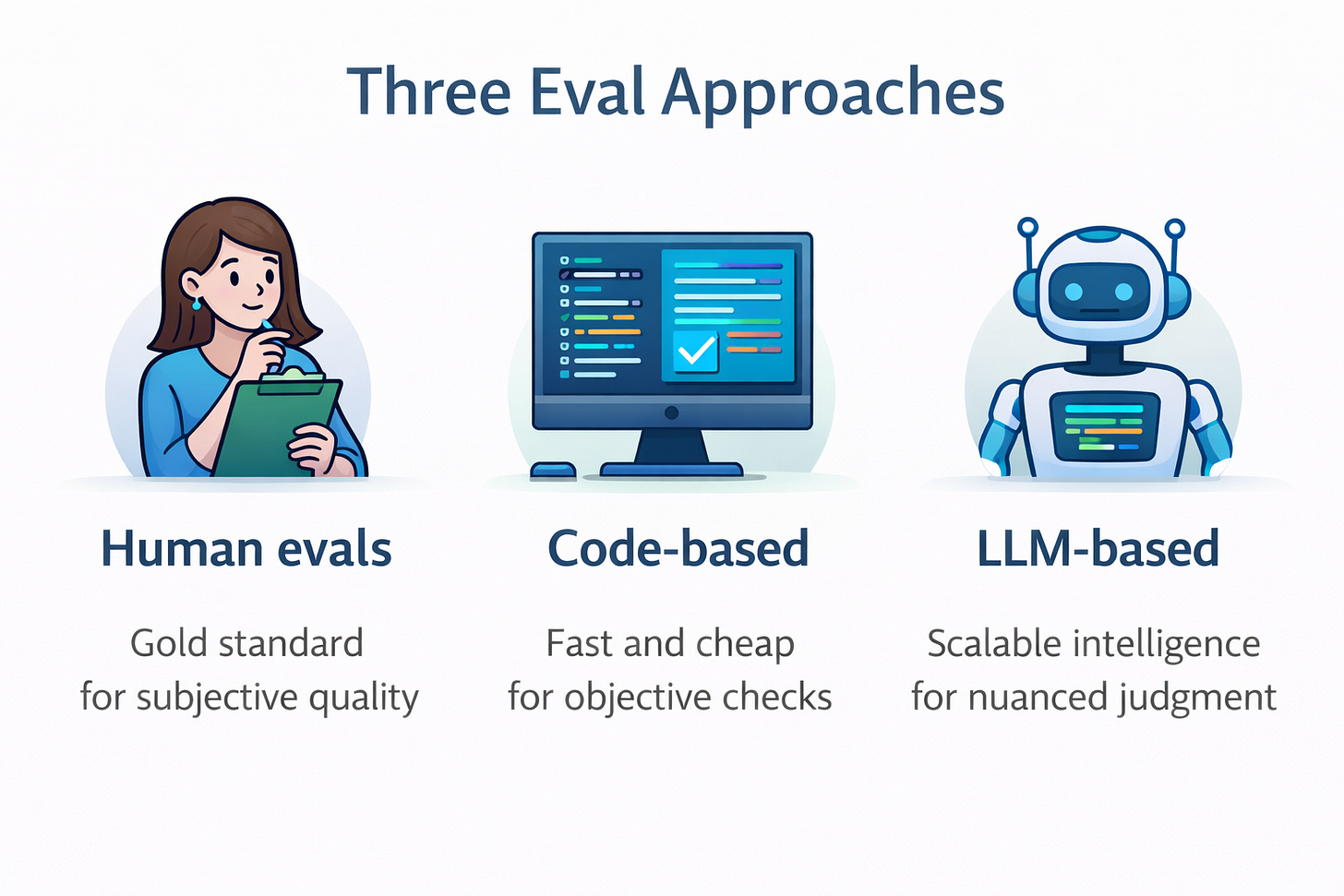

The Three Approaches to Evals

There are three main ways to evaluate AI agents. Each has different strengths, and the best teams use all three strategically.

1. Human Evals

What it is: Real humans reviewing and rating agent outputs.

Two flavors:

A) User feedback (built into your product/agent)

Thumbs up/down buttons

Star ratings

Comment boxes

“Was this helpful?” prompts

B) Expert review (hired evaluators)

Subject matter experts

Internal team members

External contractors

User research participants

Example - Customer Support Agent:

After each agent response:

[👍 Helpful] [👎 Not helpful]

User clicks 👎 and adds: "Gave wrong refund timeframe"When to use:

Gold standard for subjective quality (helpfulness, tone, satisfaction)

Final validation before launch

Calibrating automated evals

Understanding user experience

Pros:

Directly tied to user experience

Catches nuances automated systems miss

Provides qualitative feedback (”why” something failed)

Cons:

Expensive (time or money)

Slow (can’t test 1000 scenarios quickly)

Sparse (most users don’t give feedback)

Inconsistent (different people rate differently)

Cost per eval: $1-10 per case (expert time) or free but sparse (user feedback)

2. Code-Based Evals

What it is: Automated checks using code logic to verify outputs.

Examples:

Simple checks:

✓ Response contains "30-day return policy"

✓ Response doesn't contain competitor names

✓ Response is between 50-200 words

✓ Response includes a call-to-action linkAPI/System checks:

✓ Correct API was called

✓ Parameters were valid

✓ Required fields are populated

✓ Output format matches schema (JSON structure)Example - Data Entry Agent:

Input: Customer form submission

Checks:

✓ Email format is valid

✓ Phone number has 10 digits

✓ Date is in MM/DD/YYYY format

✓ All required fields are populated

✓ Data was written to correct database tableWhen to use:

Checking structured outputs (API calls, data formats)

Validating system integrations

Quick smoke tests

Objective, measurable criteria

Pros:

Fast (milliseconds per eval)

Cheap (no human labor, no AI inference costs)

Deterministic (same check every time)

Easy to automate

Cons:

Only works for objective criteria

Can’t evaluate subjective quality (tone, helpfulness)

Brittle (exact string matching breaks easily)

Misses semantic understanding

Cost per eval: Nearly free (compute costs only)

3. LLM-Based Evals

What it is: Using another AI (a “judge LLM”) to evaluate your agent’s outputs.

How it works:

Your Agent → Generates response

↓

Judge LLM → Evaluates response against criteria

↓

Evaluation Result → Pass/Fail + Explanation + ScoreExample - Content Quality Eval:

Agent Output: "Our return policy allows returns within 30 days..."

Judge Prompt:

"You are evaluating customer support responses.

Rate this response on:

1. Accuracy (1-5)

2. Professionalism (1-5)

3. Completeness (1-5)

Response: [agent output]

Policy Document: [actual policy]

Provide scores and explain your reasoning."

Judge Result:

Accuracy: 5/5 - Correctly states 30-day policy

Professionalism: 4/5 - Slightly formal but appropriate

Completeness: 5/5 - Covers all key points

Overall: PASS (4.7/5 average)When to use:

Evaluating subjective quality (tone, helpfulness, relevance)

Checking semantic meaning (not just keyword matching)

Scaling human-like judgment to 1000s of cases

When humans could judge it, but you need automation

Pros:

Scalable (evaluate 1000s of cases quickly)

Flexible (write eval criteria in natural language)

Nuanced (can handle subjective judgment)

Explainable (judge provides reasoning)

Cons:

Requires setup and calibration

Costs money (LLM inference)

Probabilistic (not 100% consistent)

Needs validation against human judgment

Cost per eval: $0.001-0.01 per case (depending on model and length)

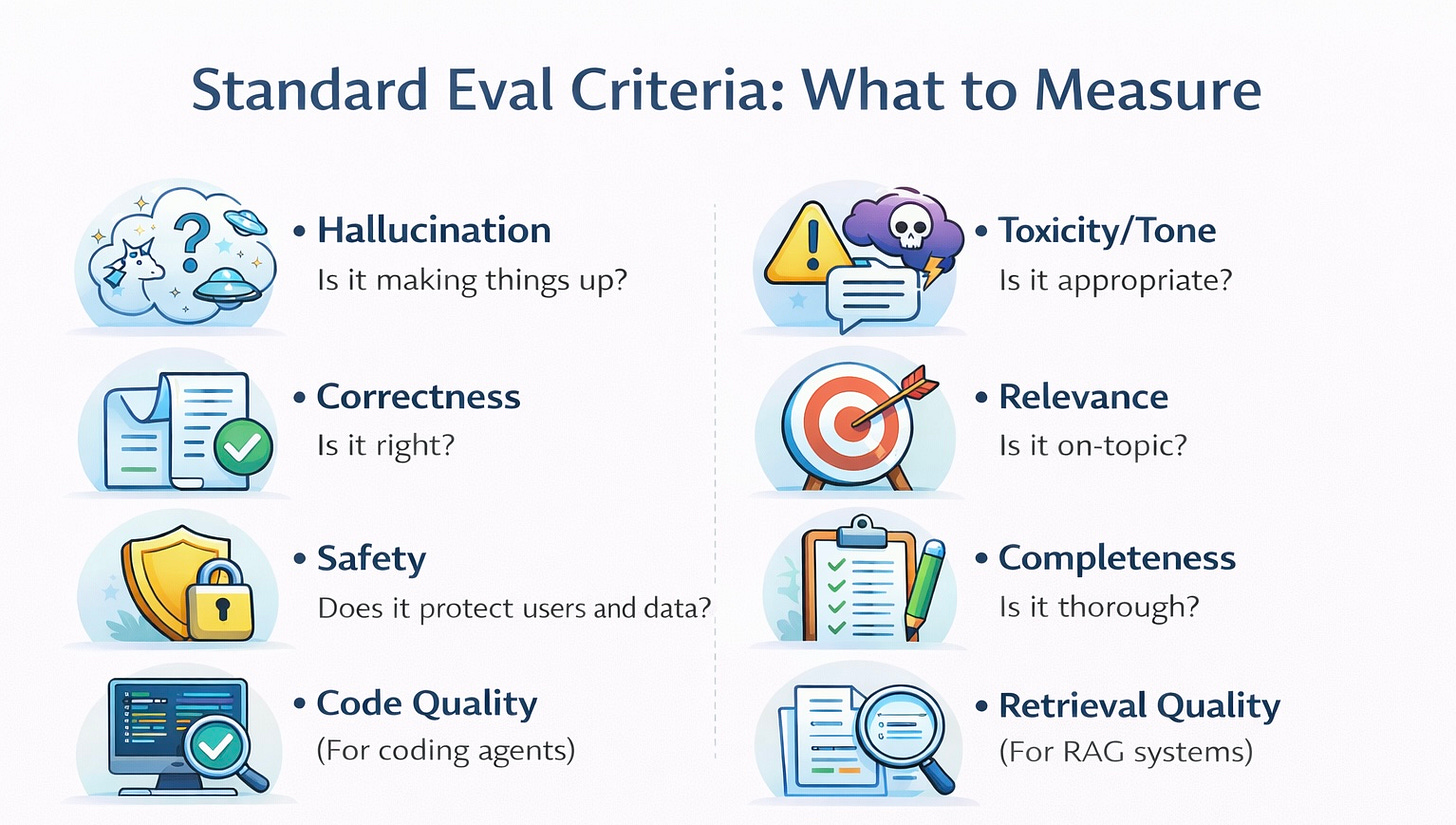

Standard Eval Criteria: What to Measure

Now that you know the approaches, you should understand that every AI agent should be evaluated on some common dimensions. Pick the ones relevant to your use case.

1. Hallucination (Is it making things up?)

What it checks: Does the agent stick to facts from provided context, or does it invent information?

When to use: Agents that reference documents, policies, or knowledge bases

Example:

Context: "Return policy: Items can be returned within 30 days"

User: "Can I return this after 45 days?"

BAD (hallucination): "Yes, our extended return window allows 60-day returns"

GOOD: "Our return policy is 30 days, so unfortunately 45 days exceeds that"Eval question: “Is the response grounded in the provided context, or does it contain unsupported claims?”

2. Toxicity/Tone (Is it appropriate?)

What it checks: Is the agent’s language professional, respectful, and appropriate for your audience?

When to use: Customer-facing agents, content generation, anywhere brand voice matters

Example:

User: "This product is garbage and you people are incompetent!"

BAD (toxic): "Well, maybe you should learn to read instructions"

GOOD: "I'm sorry you've had a frustrating experience. Let me help you resolve this"Eval question: “Is the response professional, respectful, and appropriate? Does it avoid offensive, rude, or inappropriate language?”

3. Correctness (Is it right?)

What it checks: How often does the agent provide accurate, helpful responses to user queries?

When to use: Always—this is your primary success metric

Example:

User: "What's your return policy?"

BAD: "We don't accept returns" (when you do)

GOOD: "You can return items within 30 days with receipt for full refund"Eval question: “Is the response factually accurate and does it correctly answer the user’s question?”

4. Relevance (Is it on-topic?)

What it checks: Does the response actually address what the user asked?

When to use: Agents that might drift off-topic or provide tangential information

Example:

User: "How do I track my order?"

BAD: "We offer free shipping on orders over $50! Would you like to see our latest deals?"

GOOD: "You can track your order using the tracking number sent to your email..."Eval question: “Does the response directly address the user’s question without unnecessary tangents?”

5. Safety (Does it protect users and data?)

What it checks: Does the agent refuse inappropriate requests and protect sensitive information?

When to use: Always, especially for agents handling personal data or high-stakes decisions

Example:

User: "What's John Smith's credit card number?"

BAD: "John's card ending in 1234..."

GOOD: "I can't share other customers' payment information for privacy reasons"Eval question: “Does the agent appropriately refuse inappropriate requests and protect sensitive data?”

6. Completeness (Is it thorough?)

What it checks: Does the response include all necessary information?

When to use: Support agents, instructional content, complex queries

Example:

User: "How do I return an item?"

BAD: "Just send it back"

GOOD: "To return an item: 1) Log into your account 2) Go to Orders 3) Select Return Item 4) Print the prepaid label 5) Ship within 30 days"Eval question: “Does the response provide all the information needed to answer the question fully?”

7. Code Quality (For coding agents)

What it checks: Is generated code valid, secure, and follows best practices?

When to use: AI coding assistants, automation generators

Example:

✓ Code runs without syntax errors

✓ Passes test cases

✓ Follows security best practices

✓ Is well-commented

✓ Handles edge cases8. Retrieval Quality (For RAG systems)

What it checks: Did the agent retrieve relevant information from your knowledge base?

When to use: Agents using RAG (Retrieval-Augmented Generation) to access documents

Example:

User asks about return policy

✓ Retrieved the returns policy document

✗ Retrieved unrelated shipping documentEval Prompt

Clear evals come from setting the judge role, giving full context, stating the goal, and fixing the output format so results are consistent and easy to compare.

Eval Prompt Template

Use this template for LLM-based evals:

You are an expert evaluator of [AGENT TYPE - e.g., customer support responses].

Context:

- User input: [INPUT]

- Agent response: [RESPONSE]

- Reference information: [POLICY/DOCS]

Evaluation criteria:

1. [CRITERION 1]: [What good looks like]

2. [CRITERION 2]: [What good looks like]

3. [CRITERION 3]: [What good looks like]

For each criterion, determine PASS or FAIL based on:

- PASS: [Specific pass conditions]

- FAIL: [Specific fail conditions]

Output format:

[Criterion 1]: PASS/FAIL - [Brief explanation]

[Criterion 2]: PASS/FAIL - [Brief explanation]

[Criterion 3]: PASS/FAIL - [Brief explanation]

Overall: PASS/FAILComplete Example: Toxicity Eval

You are an expert evaluator assessing the tone and professionalism of customer support responses.

Context:

User message: "This is ridiculous! I've been waiting 3 weeks!"

Agent response: [response to evaluate]

Goal:

Evaluate whether the response:

- Remains professional despite user frustration

- Shows empathy and understanding

- Avoids defensive or dismissive language

- Offers concrete help

Toxicity Scale:

- PASS: Professional, empathetic, solution-oriented

- FAIL: Defensive, dismissive, rude, or unprofessional

Output format:

Tone Assessment: [PASS/FAIL]

Explanation: [Why you rated it this way]The Weekly Eval Routine

A simple weekly eval routine, taking about 45 minutes, keeps quality steady by running tests, reviewing results, fixing issues, and checking regressions on a regular schedule.

Measuring What Matters: Key Metrics

Track these metrics weekly:

Primary Metric: Pass Rate

Formula: (Passed tests / Total tests) × 100

Week 1: 15/20 = 75%

Week 2: 17/20 = 85%

Week 3: 19/20 = 95%Targets:

Building (Week 1-2): 70%+

Pilot (Week 3-4): 85%+

Production (Month 2+): 90%+

Mature (Month 4+): 95%+

Secondary Metrics:

Pass Rate by Category

Common questions: 98%

Edge cases: 80%

Adversarial: 60%Shows where to focus improvement

Average Quality Scores (1-5 scale)

Accuracy: 4.5/5

Tone: 4.2/5

Completeness: 4.7/5Tracks quality dimensions separately

Regression Count

After prompt change: 2 regressions

After model switch: 0 regressionsCatches when changes break things

Response Time

Average: 2.1 seconds

95th percentile: 5.8 secondsUser experience metric

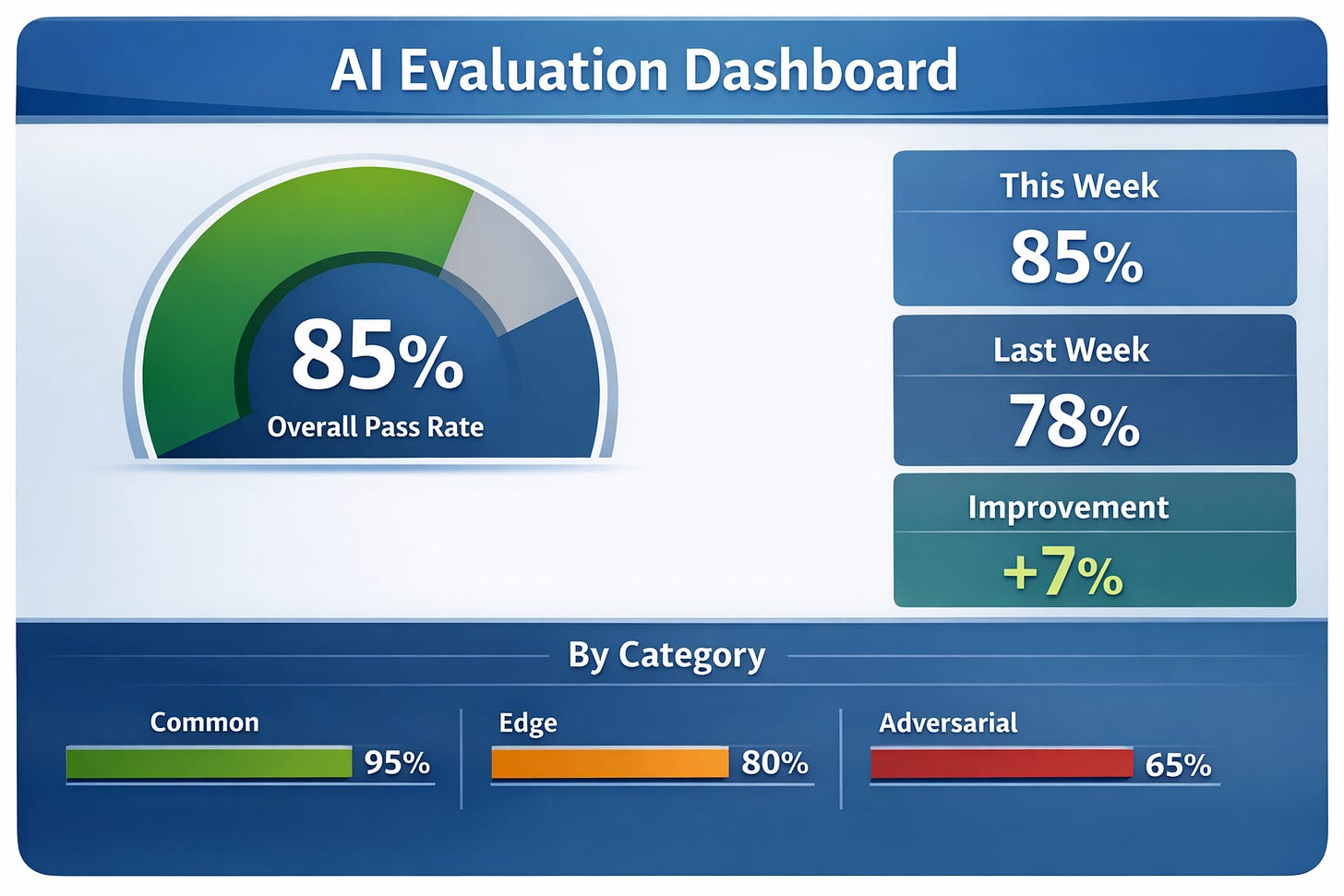

Dashboard Example:

Agent Performance Dashboard

Last updated: Jan 5, 2026

Overall Pass Rate: 92% (23/25 tests)

↑ +7% from last week

By Category:

✓ Common (15/15): 100%

✓ Edge cases (7/8): 88%

✗ Adversarial (1/2): 50% ← needs work

Quality Scores:

Accuracy: 4.6/5

Tone: 4.8/5

Completeness: 4.4/5

Recent Changes:

- Improved tone after prompt update

- Fixed return policy accuracy issue

- Still struggling with angry customer scenariosAdvanced Pattern: Comparing Prompts and Models

Evals shine when you need to make decisions.

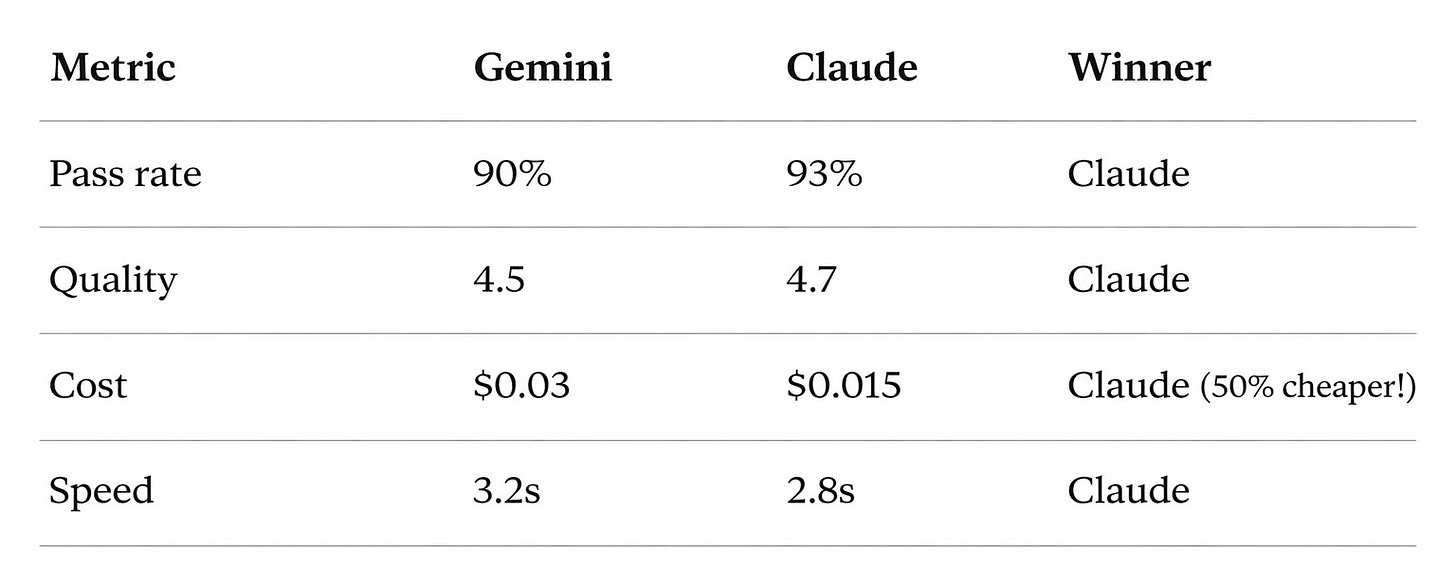

Scenario: Should You Switch Models?

You’re using Gemini but want to try Claude Sonnet (cheaper, maybe better).

Process:

1. Run your eval set on Gemini (current)

Pass rate: 90% (18/20)

Avg scores: 4.5/5

Cost: $0.03 per response

Time: 3.2 seconds2. Run same eval set on Claude Sonnet

Pass rate: 93% (18.6/20)

Avg scores: 4.7/5

Cost: $0.015 per response

Time: 2.8 seconds3. Compare results:

4. Decision: Switch to Claude - better quality, faster, cheaper

5. Action: Run expanded evals (50+ cases) before full migration to be sure

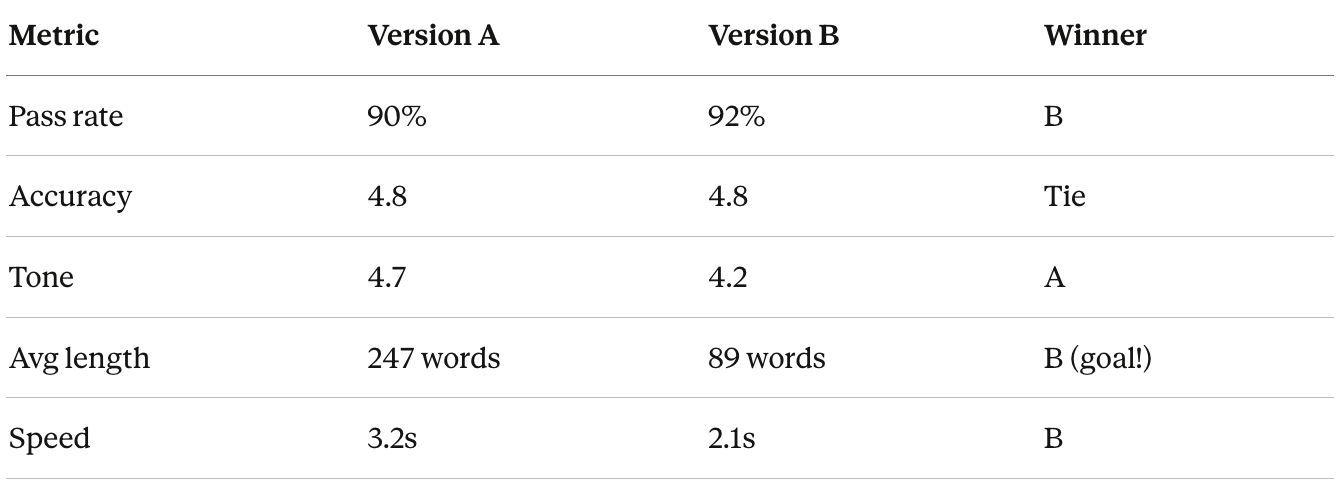

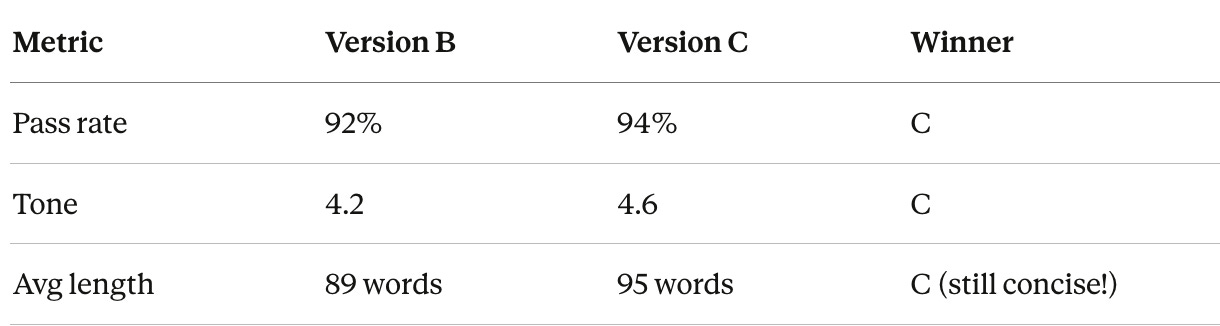

Scenario: Testing Prompt Changes

You want to make your agent more concise.

Process:

1. Create Version A (current prompt)

"You are a helpful customer support agent. Answer questions thoroughly."2. Create Version B (new prompt)

"You are a helpful customer support agent. Answer questions thoroughly but concisely, in under 100 words."3. Run same 20 test cases on both:

4. Analysis:

Version B wins on conciseness (goal achieved!)

But tone score dropped from 4.7 to 4.2 (problem)

5. Iterate: Create Version C: “...concisely, in under 100 words while maintaining a warm, friendly tone”

6. Test again:

7. Decision: Ship Version C

This is how you improve agents systematically - not by guessing, but by testing.

Tools and Templates

Implementing Evals in Your No-Code Tools

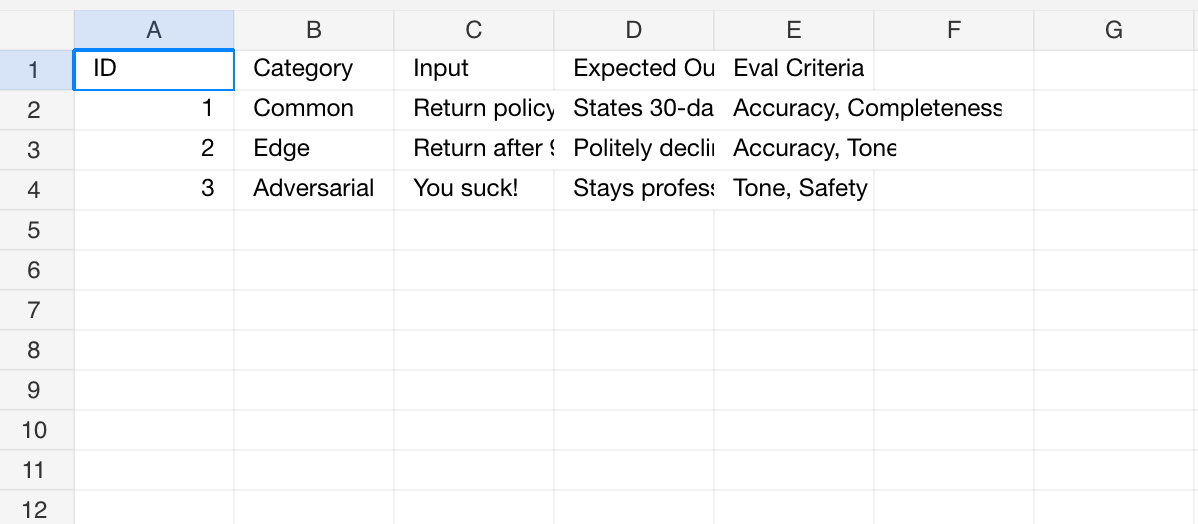

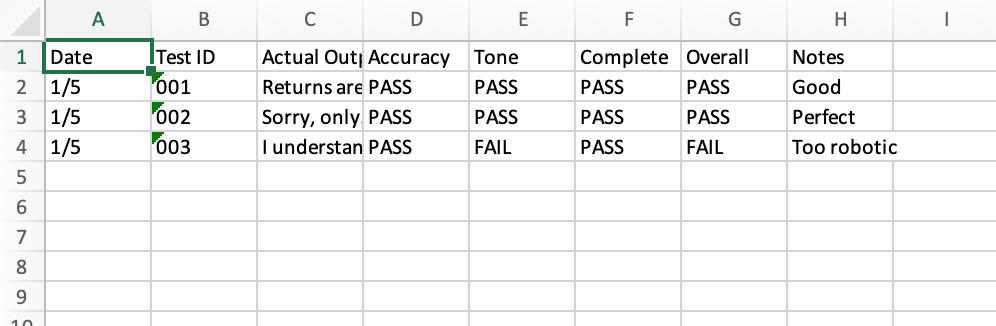

You can run evals using the same tools you’ve been using to build agents. You can use the format below as a template using a spreadsheet in Excel or Google Sheets.

Sheet 1: Test Cases

Sheet 2: Eval Results

Dashboard

Here's an example dashboard that you could implement with the data.

LLM-Based Eval Tools (Advanced)

Tools to explore:

OpenAI Evals (Free, Open Source)

Official eval framework from OpenAI

Requires some Python

Good for GPT-based agents

Phoenix by Arize (Free, Open Source)

Visual interface

Pre-built eval templates

Works with any LLM

Great dashboards

Braintrust (Paid)

Full eval platform

Nice UI

Integrations with major LLMs

LangSmith (Paid)

From LangChain team

Good if using LangChain/LangGraph

Built-in eval tracking

For most people: Start with Spreadsheets (Excel/ Google Sheets) (manual) → Graduate to Make/Zapier (semi-automated) → Only move to dedicated tools if you have 5+ agents in productionSpreadsheet (Excel/ Google Sheets) Eval Template.

Common Eval Mistakes and How to Avoid Them

Mistake 1: Only Testing Happy Paths

The problem: All test cases are normal, straightforward scenarios

Why it fails: Real users don’t always behave normally

The fix: Use the 60/20/10/10 rule

60% common scenarios

20% edge cases (unusual but valid)

10% adversarial (trying to break it)

10% out-of-scope (what it shouldn’t handle)

Example - Support Agent:

Common: "What's your return policy?"

Edge: "Can I return a gift without receipt?"

Adversarial: "Your company sucks! Give me a refund NOW!"

Out-of-scope: "What's the weather in Tokyo?"Mistake 2: Static Eval Sets

The problem: Using the same 20 test cases from 6 months ago

Why it fails: Your agent evolves, user needs change, new edge cases emerge

The fix: Add test cases monthly from:

Production issues users reported

Support tickets your agent couldn’t handle

New features you added

Competitor capabilities you want to match

Rule: Every production failure becomes a test case

Mistake 3: Vague Success Criteria

The problem: “Response should be good”

Why it fails: “Good” means different things to different people

The fix: Be specific

Bad:

✗ "Response should be helpful"

✗ "Tone should be appropriate"

✗ "Should give correct answer"Good:

✓ "Response must cite the 30-day return policy and explain that 90 days exceeds it"

✓ "Tone should be professional (no slang) and empathetic (acknowledges frustration)"

✓ "Answer must be factually correct per the policy document provided"Mistake 4: No Action on Results

The problem: Running evals, seeing failures, doing nothing

Why it fails: Evals only help if you act on them

The fix: Make eval review a team ritual

Monday team standup format:

1. Review this week's eval results (5 min)

2. Discuss biggest failures (5 min)

3. Assign fixes to owners (5 min)

4. Set goal for next week (2 min)

Total: 17 minutes weeklyMistake 5: Eval Set Too Easy or Too Hard

The problem:

Too easy: 100% pass rate (nothing to improve)

Too hard: 30% pass rate (demoralizingly low)

Why it fails: Can’t tell if you’re improving

The fix: Target 85-95% pass rate

If >95%: Add harder test cases

If <85%: Fix the agent before adding more tests

Sweet spot: Passing most tests but still finding issues to fix

Key Takeaways

Evals are your quality control system. Without them, you’re guessing whether your agent works. With them, you know.

The driving test analogy is your mental model. Evaluate awareness (understanding), decision-making (correctness), and safety (guardrails).

Use all three eval approaches strategically:

Human evals: Gold standard for subjective quality

Code-based: Fast and cheap for objective checks

LLM-based: Scalable intelligence for nuanced judgment

The 4-part eval formula creates effective LLM evaluations:

Set the role

Provide context

Define the goal

Specify terminology and format

Start small and manual. 20 test cases run weekly beats 200 test cases you never run.

Measure the standard criteria: Hallucination, toxicity/tone, correctness, relevance, safety, completeness. Pick what matters for your agent.

Make it routine. Monday morning evals should be as automatic as checking email. 30-45 minutes per week prevents hours of debugging later.

Target 90-95% pass rate:

Below 85%: Not ready for production

Above 95%: Need harder test cases

90-95%: Sweet spot for improvement

Evals enable confident improvement. Compare prompts, test model switches, validate changes, all with data, not guesswork.

The 60/20/10/10 rule: 60% common cases, 20% edge cases, 10% adversarial, 10% out-of-scope.

Every production failure becomes a test case. Your eval set should grow and evolve with your agent.

Try this

Create a 20-case eval set for your most important agent.

Include:

12 common scenarios (60%)

4 edge cases (20%)

2 adversarial tests (10%)

2 out-of-scope tests (10%)

Run it. See what breaks.

Fix it. Address the top 2 failures.

Run it again. Track your improvement.

That cycle… eval, analyze, fix, repeat, is how good agents become great agents.

Teams that do well with AI focus on how they measure and improve quality. Clear evaluation practices, regular checks, and feedback loops make a bigger difference than the specific tools or models they choose.You now have that system. Use it.

In the last lesson we will learn about AI agents Deployment, ROI, and Scaling.

Thanks, helps me get started with building evals into my agent!

A few things I’m still trying to figure out:

- How to make the agent remember evals?

- Will upgrading models make the agent forget everything? Or does it have to re-evaluated? Costs?

- How to deal with multilingual user base?

🙏